I Didn't Give AI My Notes. I Built It a Context Layer.

Apr 25, 2026

Somewhere in an early draft of the JDM book, I described Thomas Myers' Anatomy Trains system and said I wanted to focus on seven lines.

I know Myers has twelve.

Any bodyworker who knows his work would catch it on page one. I hoped it would make them pause, think, and go look at his work. But it could also read as exactly the kind of error that happens when someone uses AI to write about a subject they half-understand and then publishes without checking. The irony would be particular: a book about precision in bodywork, imprecise about one of its foundational sources.

The error was caught. More importantly, the way it was fixed is the reason the book exists at all.

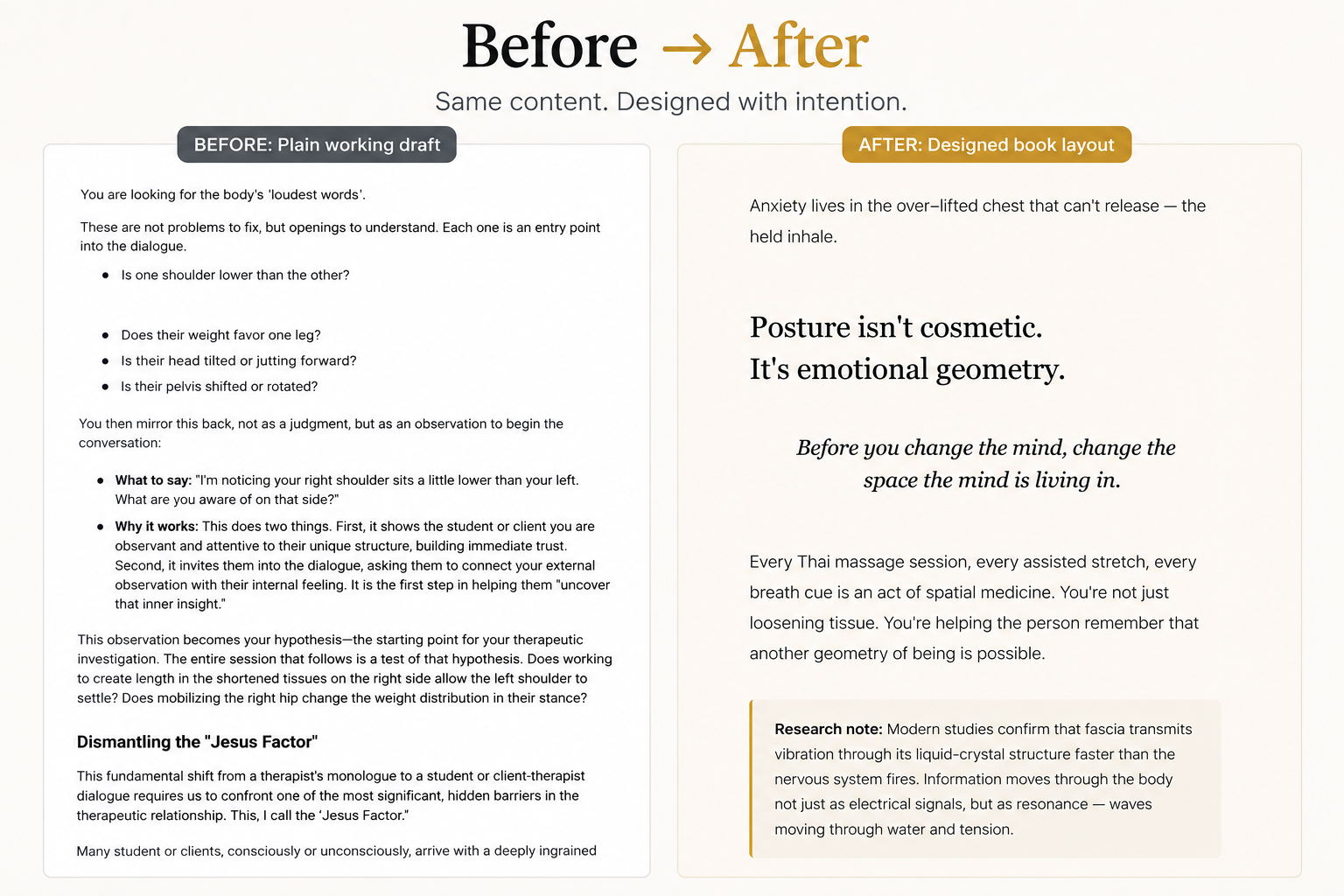

I didn't hand an AI a prompt that said "fix the Anatomy Trains section." I gave it a brief: a four-page editorial document that named the exact problem, provided the corrected framing paragraph, described all twelve lines with accurate pathway anatomy in the book's clinical voice, and listed, line by line, what each section currently said and what it needed to say instead.

Here is how that brief opened:

The current draft refers to 7 Anatomy Trains lines. Thomas Myers' system has 12 individual lines (he names this explicitly in his teaching). Readers who know Myers' work — the primary audience for a serious bodywork book — will notice this discrepancy immediately. It currently reads as an oversimplification or error.

The fix is not to change the clinical approach (JDM still works across all 12) — it is to: correctly frame the full 12 in one clear introductory paragraph, replace or expand each line description with accurate, specific language, add the 5 missing lines, and keep the pedagogical simplicity of "7 families" — just be honest that each family contains sub-lines.

And then the brief gave the replacement paragraph — word for word, in the book's voice, ready to use:

Thomas Myers identifies twelve myofascial meridians — twelve continuous pathways through which fascial force is transmitted across the body. In JDM, I work across all twelve, organised into seven clinical families for practical clarity... I work with these families, not individual muscles. The question I am always asking is not which muscle is tight — it is which line is short, which is long, and where has the chain been interrupted?

The AI loaded the brief. The book was corrected. Not approximately — precisely. Because the brief was precise.

That is what this article is about.

The problem with deep knowledge and AI

There is a specific failure mode when practitioners with real expertise try to use AI to write about their work.

You give it your notes. Twenty years of clinical experience, three mapping systems, thousands of sessions. It reads everything and returns something that sounds like a wellness blog. Clear. Readable. Wrong in ways that are hard to point at.

Not because the technology failed. Because averaging is what language models do when context is thin. They find the center of everything you gave them and write from there. The center of Thai massage plus fascial science plus meridian theory plus thirty years of practice is exactly where the internet already lives. Generic.

I wrote a book that integrates three separate systems: Sen Sib lines from Thai traditional medicine, sixteen meridian circuits from Bob Cooley's work, and twelve myofascial lines from Thomas Myers. Three traditions, three vocabularies, two thousand years of practice and three decades of Western research. Each one could fill its own book. The synthesis — what JDM actually is — lives in the precise relationship between them.

Dumping that into a prompt returns the average of three traditions. The average is not the synthesis. The synthesis is the work.

The AI flatness problem is not a model problem. It is a context problem. The model has no way to know what is authoritative, what is peripheral, what is Gabe's voice and what is generic bodywork language — unless you build a layer that tells it. Precisely.

That's when I found Jake Van Clief's Claude course, Claude Foundation, and everything changed for me.

The architecture

Three separate project sandboxes. Each with its own governing file. Each reading from and writing to known locations.

- Master Control (orchestrator): Reads across everything. Writes reference files, briefs, and handoffs. Does not write prose or generate images.
- Writing sandbox (My-Content-Ai/): Book editing, articles, podcast, email sequences. Has its own CLAUDE.md. Reads from reference folders. Writes to known output folders.
- Design sandbox (My-Cover-Designs/): All visual outputs (illustrations, covers, thumbnails). Has its own CLAUDE.md. Reads design briefs. Writes images to known output folders.

The governing file for the writing sandbox opens like this:

# GabeYoga — My-Content-Ai

Written content project. Podcast episodes, Instagram posts, and book/reference material live here.

Who This Is For: Gabe Azoulay — yoga teacher, Thai yoga massage therapist, educator.

Audience: yoga teachers and serious practitioners.

Voice: teacher-to-teacher, direct, grounded, precise. No performance, no jargon.

That file tells the writing session who it is, what it is doing, where everything lives, and what the rules are, before it touches a word. The design sandbox has an equivalent file for visual work. Each sandbox is isolated. The writing session cannot accidentally pick up a design file. The design session does not read the book. Each knows its lane, its sources, its outputs.
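The lane idea is easy to state and easy to lose, so here is a minimal Python sketch of it. It is an illustration under assumptions, not the actual project setup: only My-Content-Ai/ and My-Cover-Designs/ come from this article, and every other folder name (reference/, briefs/design/, handoffs/ and so on) is hypothetical. Each sandbox declares where it may read and where it may write, and any path outside those roots is refused.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Sandbox:
    """One project sandbox and the folders it may touch (hypothetical layout)."""
    name: str
    reads: tuple[str, ...]   # folder roots this sandbox may read from
    writes: tuple[str, ...]  # folder roots this sandbox may write to

    @staticmethod
    def _under(path: str, roots: tuple[str, ...]) -> bool:
        # True if `path` sits inside any of the given root folders.
        # PurePath.is_relative_to needs Python 3.9+.
        p = PurePosixPath(path)
        return any(p.is_relative_to(root) for root in roots)

    def can_read(self, path: str) -> bool:
        return self._under(path, self.reads)

    def can_write(self, path: str) -> bool:
        return self._under(path, self.writes)


# Folder names other than My-Content-Ai/ and My-Cover-Designs/ are assumptions.
MASTER_CONTROL = Sandbox(
    name="master-control",
    reads=("My-Content-Ai", "My-Cover-Designs", "reference", "briefs", "handoffs"),
    writes=("reference", "briefs", "handoffs"),  # reference files, briefs, handoffs; no prose, no images
)
WRITING = Sandbox(
    name="writing",
    reads=("reference", "briefs/editing", "My-Content-Ai/stories", "My-Content-Ai/book"),
    writes=("My-Content-Ai/book", "My-Content-Ai/output"),
)
DESIGN = Sandbox(
    name="design",
    reads=("briefs/design",),
    writes=("My-Cover-Designs/output",),
)

print(WRITING.can_read("reference/anatomy-trains.md"))  # True
print(DESIGN.can_read("My-Content-Ai/book/draft.md"))   # False
```

The design choice worth noticing is that the rules live in data, not in the model's judgment: in this sketch the design sandbox cannot read the book simply because no read root covers it.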

Inside the writing sandbox, the context layers were built bottom-up.

Layer 1 — Raw stories. The Pichest story. My brother's leukemia recovery. Not edited, not summarized. Raw transcripts, saved as their own files. The model reads these as source material. It is not asked to invent my stories. It is given the actual stories, in my actual voice, in the roughness they were spoken in.

Layer 2 — Reference documents. One master reference file per mapping system. Structured so a session can load it and immediately have complete, accurate clinical context without asking me anything. If the book draft and the reference file disagree, the reference file wins.

Layer 3 — The voice contract. Not a style guide. A contract. This is what the writing session reads before drafting anything:

Gabe's Voice:

The core register: Personal and direct. Warm but precise. Not clinical, not woo. Like a teacher who has seen it all and is genuinely excited to share what actually works. He doesn't lecture. He notices. His best content starts from an observation: something he saw in a client, something he felt in a session, something he realized while teaching.

Phrases that are distinctly Gabe:
- "Pain is not the enemy — it's the request."
- "The problem is never where the pain is."
- "Dialogue, not monologue."
- "The body remembers everything."

Never write: "transform your life," "embark on a journey," "unlock your potential," "game-changer," wellness fluff.

That file goes in before the first word. Generic output becomes structurally impossible, not because the model is trying harder, but because the context no longer permits it.

Layer 4 — Editing briefs. This is the layer the seven-line error revealed. A brief is not a prompt. A prompt says: improve this section. A brief says: here is what is wrong, here is the accurate content, here is the exact paragraph that replaces the current one, here is a line-by-line list of what to change in each subsection. Precise enough that a human editor could work from it without asking any questions.

The Anatomy Trains brief was four pages. It gave accurate pathway descriptions for all twelve lines. It noted specific errors in the current draft of each section. It gave clinical descriptions for each line, ready to use or adapt. It ended with a summary table:

| Action | Section | Change |
|---|---|---|
| Replace | The framing paragraph | Use the new "12 lines in 7 families" language |
| Fix | SBL | Add sacrotuberous ligament; extend to brow ridge |
| Fix | SFL | Add retinaculum detail; extend to mastoid |
| Fix | LL | Make bilateral; add Y-shape at hip |
| Fix | SL | Make bilateral; add rhomboid-to-serratus connection |
| Expand | Arm Lines | Break into 4 individual entries: SFAL, DFAL, SBAL, DBAL |
| Add | Back Functional Line | Currently missing entirely |
| Fix | DFL | Add tongue/hyoid connection; add visceral-wrapping note |

The session loaded the brief. The book was corrected. The correction was precise because the brief was precise, not because the model figured it out.
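For readers who want the difference between a prompt and a brief in concrete form, here is a minimal Python sketch. It is a hypothetical shape, not the actual brief format: the class names, the fields, and the completeness check are assumptions chosen to mirror the parts of a brief described above (the problem, the replacement paragraph, the section-by-section changes). The check is crude on purpose; it only asks whether each part exists.

```python
from dataclasses import dataclass, field


@dataclass
class SectionChange:
    action: str   # "Replace", "Fix", "Expand", or "Add"
    section: str  # which part of the draft, e.g. "SBL"
    change: str   # what that section needs to say instead


@dataclass
class EditingBrief:
    problem: str                # what is wrong, named exactly
    replacement_paragraph: str  # ready-to-use text in the book's voice
    changes: list[SectionChange] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Crude proxy for 'could an editor work from this without asking questions?'"""
        missing = []
        if not self.problem.strip():
            missing.append("problem")
        if not self.replacement_paragraph.strip():
            missing.append("replacement_paragraph")
        if not self.changes:
            missing.append("changes")
        return missing

    def render(self) -> str:
        """Flatten the brief into the plain text a session would load."""
        lines = [f"Problem: {self.problem}", "", "Replacement paragraph:",
                 self.replacement_paragraph, "", "Changes:"]
        lines += [f"- {c.action} {c.section}: {c.change}" for c in self.changes]
        return "\n".join(lines)


brief = EditingBrief(
    problem="The draft refers to 7 Anatomy Trains lines; Myers' system has 12.",
    replacement_paragraph=("In JDM, I work across all twelve, organised into "
                           "seven clinical families for practical clarity."),
    changes=[
        SectionChange("Fix", "SBL", "add sacrotuberous ligament; extend to brow ridge"),
        SectionChange("Add", "Back Functional Line", "currently missing entirely"),
    ],
)
prompt_only = EditingBrief(problem="Improve this section.", replacement_paragraph="")

print(brief.missing_parts())        # []
print(prompt_only.missing_parts())  # ['replacement_paragraph', 'changes']
```

The bare prompt fails on two counts before anyone reads a word of it; the brief passes. Presence is not precision, of course: the precision still has to be in what the fields say.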

Layer 5 — Design briefs. The formal handoff to the design sandbox. The design session reads this. It does not read the book. It reads what it needs: card format, color system, what each illustration must show. Thirty-eight anatomical illustration cards (twelve Anatomy Trains lines, sixteen meridian circuits, ten Sen Sib lines), rendered across all three mapping systems in one consistent visual language, because the brief was precise.

What one reference file actually does

After the book was complete, I kept the reference files open.

The master JDM reference — the file the writing session reads first — powered the landing page for JDM sessions, the podcast episode briefs, the email sequences, every article in the Healing pillar, the Instagram posts, and this article.

Same file. Different outputs. The investment was made once.

This is the thing that surprises people when they see the system running. They expect content creation to be additive — more effort for more output. What the reference layer does is multiplicative. One thorough source file, built once, reduces every downstream writing task to a reading and filtering problem rather than a reconstruction problem.
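The "built once, reused everywhere" pattern fits in a few lines of Python. This is a hypothetical illustration, not the author's tooling: the layer names, their order, and the sample texts are assumptions, with the voice contract placed first because that file goes in before the first word. The shared context is assembled once; each output (landing page, podcast brief, email sequence, post) only appends its own task.

```python
# Hypothetical layer order; the voice contract is placed first on purpose.
LAYER_ORDER = ("voice_contract", "reference", "raw_stories")


def assemble_shared_context(layers: dict[str, list[str]]) -> str:
    """Join layer texts in LAYER_ORDER, whatever order the dict was built in."""
    parts = []
    for name in LAYER_ORDER:
        for text in layers.get(name, []):  # missing layers are simply skipped
            parts.append(f"[{name}]\n{text.strip()}")
    return "\n\n".join(parts)


def session_input(shared: str, task: str) -> str:
    """One output = the same shared context plus a task-specific instruction."""
    return f"{shared}\n\n[task]\n{task.strip()}"


# Built once. Sample texts are lifted from this article; the keys are assumptions.
shared = assemble_shared_context({
    "reference": ["In JDM, I work across all twelve, organised into seven "
                  "clinical families for practical clarity."],
    "voice_contract": ["Voice: teacher-to-teacher, direct, grounded, precise. "
                       "No performance, no jargon."],
})

# Reused many times: each output appends only its own task.
outputs = ("landing page", "podcast episode brief", "email sequence", "Instagram post")
inputs = [session_input(shared, f"Draft the {kind}.") for kind in outputs]

print(inputs[0].splitlines()[0])  # [voice_contract]
```

Nothing in the shared part changes between outputs, which is the point: the investment sits in the voice and reference files, and each new task adds only a line.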

The alternative — which most people experience — is each session starting from scratch. The model reads whatever you paste into it and writes from the center of that. The next session, the same. The voice drifts. Clinical accuracy drifts. The whole system requires the same corrections repeatedly.

That is not a prompting problem. That is an infrastructure problem.

The principle

Matthew Creamer calls it ICM — Interpretable Context Methodology. Context as a first-class asset, not something you stuff into a prompt.

In practice: every piece of knowledge lives in a deliberate place. Raw stories stay raw. Reference files are reference files. Editing briefs are editing briefs. Each layer knows what it is and is not asked to be something else.

Every session reads from known locations and writes to known locations. The writing sandbox reads from the reference folder. The design sandbox reads from the briefs folder. No hunting, no cross-contamination.

The architecture cannot drift because the structure does not permit drift.

This is the same principle JDM runs on. I do not give a client a general protocol. I build a context layer first: standing assessment, the language of sensation we will use, the specific pattern we are addressing. Only then does the session know what to do.

The model works identically. Give it a general question and it returns a general answer. Give it a context layer built from the specific details of your work — and it returns something only you could have written.

The JDM book is on Amazon. If you want to experience the method directly: gabeyoga.com/